One of our clients was spending five full days on every manual deployment — not because they lacked talent, but because their Octopus Deploy environment had never been properly assessed or updated since it was first stood up years earlier. Tentacles (Octopus's deployment agents) had accumulated. Integrations had drifted. And nobody had stopped long enough to ask whether any of it still made sense. If that sounds familiar, you're not alone. Aging, unexamined Octopus Deploy environments are one of the most consistent problems we see across enterprise teams in healthcare, financial services, insurance, energy, and beyond.

Here's how we help teams fix that — and what's possible on the other side.

Phase I: Assessing Your Octopus Deploy Environment Before Migration

When a client comes to us with an aging Octopus Deploy environment, we don't start by recommending an immediate upgrade. We start by looking carefully at what is already there.

Our Octopus Deploy Migration Planning engagement — designed specifically for complex environments — gives teams a complete picture before a single migration step is taken. This includes:

- Analysis of the existing Octopus Deploy instance — what version, what configuration, what integrations, what's working and what's fragile

- Analysis of the software being deployed and the nature of the environments being deployed to (cloud, hybrid, on-prem)

- Sequencing of major migration or upgrade steps so nothing falls through the cracks

- Best practices recommendations tailored to your architecture

In Phase I, our team conducts a thorough technical analysis of the client's Octopus Deploy environment, assesses and prioritizes their existing application portfolio across multiple environments, evaluates future applications as candidates for migration, and produces a formal migration plan with documented steps and a timeline. Trying to determine whether to stay on-prem or move to the cloud? We will uncover the information you need to make a confident decision.

The output of Phase I isn't just a document — it's the strategic foundation that makes Phase II possible without unnecessary risk.

Phase II: Proof of Concept for Your Octopus Deploy Migration

With the migration plan from Phase I, we move into Phase II: Proof of Concept Implementation.

Rather than attempting a full migration all at once, we use the POC approach to validate assumptions, surface any environment-specific surprises, and demonstrate end-to-end success for one application before scaling. This single-application migration serves as the proving ground: it tests the target Octopus Deploy environment setup, validates deployment pipelines, and builds the client team's confidence in the approach.

A well-run POC de-risks the broader migration, gives stakeholders something concrete to evaluate, and creates a replicable pattern your team can follow for every subsequent application.

Phase III: Full Portfolio Migration with Octopus Deploy

Following Phases I and II, you'll have something most teams never start with: a validated migration pattern, a target environment that's been proven to work, and a team that has already done this once successfully. That changes everything about how the remaining portfolio gets migrated.

At that point, we'll present a full estimate for converting the remainder of your applications to Octopus Deploy. From there, you have options. Our team can execute the full migration on your behalf, or your team can take on the work directly with our experts advising alongside them. Whichever path you choose, one thing stays the same: your team will understand exactly how the work is done, with detailed documentation and hands-on guidance until they're fully confident managing the environment on their own.

Octopus Deploy Training: Up-Leveling Your Team for Long-Term Success

Deploying a new version of Octopus is only as valuable as your team's ability to use it well. That's why team enablement is built into every Clear Measure engagement — not treated as an afterthought.

We meet teams where they are. If your team is new to Octopus or needs to reset some ingrained habits, a focused 2-hour orientation gets everyone aligned quickly. For organizations ready to go deeper, a full day of platform engineering planning helps connect architecture decisions to long-term delivery goals. And for enterprise teams looking to build advanced expertise and deploy at scale, we offer pro-level training customized to your environment and roadmap.

As Clear Measure Chief Architect Jeffrey Palermo puts it:

"There isn't anything Octopus can't deploy. But if automated DevOps is new to your team, make sure to plan your platform engineering properly. Empower your team to establish quality, achieve stability, and increase speed of delivery."

Learn more about how we work with Octopus Deploy across industries and team sizes.

What Our Clients Are Saying

"It was taking our team five days to do a proper manual deployment, so I decided it was time to move automation to the next level. By increasing the utilization of Octopus Deploy's automation features from 10% to 80%, the company has increased productivity by over 84%. Now we have more efficiency and accuracy. It's a completely different deployment experience." — SVP of Operations, Alphapoint

"Our old method of deployments was cumbersome on our IT team, and required significant time and stress. Clear Measure helped us set up an Octopus Deploy configuration that allows us to initiate mid-day deployments, saving time we would have normally spent after-hours to do a deployment." — Frontier

How Octopus Deploy Fits Into a Modern AI DevOps Architecture

Upgrading Octopus Deploy is one piece of a larger picture. At Clear Measure, we view deployment tooling as a core component of a modern AI DevOps architecture: the interconnected system of pipelines, automation, feedback loops, and intelligence that allows engineering teams to deliver software reliably and rapidly.

When your Octopus Deploy instance is current, properly configured, and correctly integrated with your build servers and cloud environments, it becomes the foundation that makes AI-assisted delivery possible — automated validation, complete auditability, and the kind of deployment speed that lets engineers focus on architecture and innovation instead of firefighting. Without that foundation, even the best AI tooling has nothing reliable to build on.

To see what this looks like end-to-end, download our AI DevOps Architecture Poster, a print-ready reference designed for .NET and Azure engineering teams.

Octopus Deploy Migration Results: Real Client Outcomes

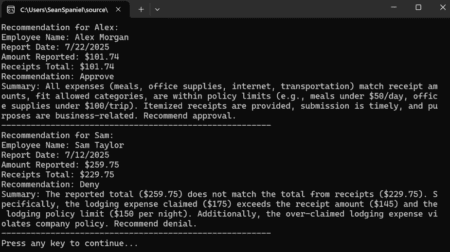

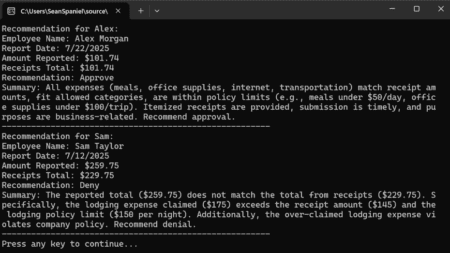

The numbers speak for themselves. In one engagement with a FinTech firm, new environments that previously took days to provision were up and running in 4 hours or less, features could be deployed on demand, and overall team productivity increased by 84%.

Read the full case study: Optimized DevOps Roadmap to Deliver Faster Results

In another engagement, a supply chain management company with over 200 employees eliminated tedious manual deployments entirely — gaining the flexibility to deploy mid-day without disruption, proactively catching errors before they reached production, and freeing their IT team from the after-hours grind that had become the norm.

Read the full case study: Streamline Deployments and Reduce Cycle Time

Both transformations started with the same foundational work — assessing what existed, planning the right path forward, and proving it out before scaling. The pattern holds across every industry we work in:

- Inspect the existing environment with honesty and rigor

- Plan the migration before touching anything

- Prove the approach with a contained POC

- Up-level the team to maximize their investment in Octopus Deploy

- Iterate through remaining applications with confidence

The result isn't just a newer version of Octopus Deploy. It's a team that understands their deployment platform, a pipeline that reflects current best practices, and an organization positioned to move faster with less risk.

Start Your Octopus Deploy Migration Planning Today

If your team is running an older self-hosted Octopus Deploy instance and isn't sure where to start, the best first step is a clear-eyed look at what you actually have — an honest technical assessment that tells you where your environment stands, what risk is accumulating, and what a better future state looks like.

That's exactly what our Octopus Deploy Migration Planning engagement is designed to deliver. Explore our Octopus Deploy practice to learn more, or contact us to start the conversation.