Semantic search is becoming an essential capability in modern applications, and with recent advancements in SQL Server and Azure AI Services, it’s now easier than ever to build intelligent search features directly into .NET apps.

With SQL Server 2025, developers gain native support for vector data types, enabling similarity search over high-dimensional data right in the database—no external services or infrastructure needed.

In this post, we explore how to build a custom RAG (Retrieval-Augmented Generation) pipeline by combining Azure AI Vision and an Azure AI Foundry embedding model to power semantic photo search:

At query time, the process is repeated:

This setup allows you to build smart, searchable applications entirely within the .NET and SQL Server ecosystem—without managing a separate vector database or third-party search service.

The code used in this post can be found on Github:

https://github.com/sspaniel-cm/dotnet-rag

To follow along or implement this solution, you’ll need access to a few key resources:

With these in place, you’re ready to build a vector-powered semantic search experience using .NET and SQL Server.

We’ll start by creating a database and table to store the image metadata, captions, and their corresponding embedding vectors. SQL Server 2025 supports the new VECTOR data type, allowing us to store high-dimensional float arrays directly in the table. For this post, the latest SQL Server 2025 Docker image was used, which can be found here:

https://hub.docker.com/r/microsoft/mssql-server

IF DB_ID('DotnetRAG') IS NULL

BEGIN

CREATE DATABASE DotnetRAG;

END

USE DotnetRAG;

IF OBJECT_ID('dbo.PhotoEmbedding', 'U') IS NULL

BEGIN

CREATE TABLE dbo.PhotoEmbedding (

PhotoEmbeddingId INT IDENTITY(1,1) PRIMARY KEY,

PhotoPath VARCHAR(MAX) NOT NULL,

Caption VARCHAR(MAX) NOT NULL,

Vector VECTOR(256) NOT NULL

);

END

Next, we generate a caption for each image using Azure AI Vision. You can send either a URL or an image file as input. Here’s how to send an image file and receive a caption:

NuGet package: https://www.nuget.org/packages/Azure.AI.Vision.ImageAnalysis

var photoData = await File.ReadAllBytesAsync(photoEmbedding.PhotoPath);

var captionResult = await imageAnalysisClient.AnalyzeAsync(

new BinaryData(photoData),

VisualFeatures.Caption

);

photoEmbedding.Caption = captionResult?.Value?.Caption?.Text ?? string.Empty;

photoEmbedding.Caption = photoEmbedding.Caption.StartsWith("a ")

? photoEmbedding.Caption[2..]

: photoEmbedding.Caption;

Using the AzureAI Foundry embed-v-4-0 model, a semantic vector is generated from the caption. For simplicity, we’ll define the output as a 256-dimensional float array, though the model can capture more semantic detail with vectors up to 1536 dimensions.

NuGet package: https://www.nuget.org/packages/Azure.AI.Inference

var options = new EmbeddingsOptions([photoEmbedding.Caption]);

options.Model = "embed-v-4-0";

options.Dimensions = 256;

var embeddingResult = await embeddingsClient.EmbedAsync(options);

photoEmbedding.Vector = embeddingResult?.Value?.Data?.FirstOrDefault()?.Embedding?.ToObjectFromJson<float[]>() ?? [];

After generating the vector, we convert it to a JSON array and insert it into SQL Server along with the file name and caption. Here’s how it looks with Dapper:

NuGet package: https://www.nuget.org/packages/dapper

var sql = @"

INSERT INTO DotnetRAG.dbo.PhotoEmbedding (PhotoPath, Caption, Vector)

VALUES (@PhotoPath, @Caption, @VectorJson);";

var parameters = new {

photoEmbedding.PhotoPath,

photoEmbedding.Caption,

VectorJson = "[" + string.Join(", ", photoEmbedding.Vector.Select(f => f.ToString("R"))) + "]"

};

await sqlConnection.ExecuteAsync(sql, parameters);

With your data in place, you can start searching. However, scanning the entire table for the nearest vectors (i.e., brute-force KNN) is slow and doesn’t scale well.

SQL Server 2025 introduces graph-based vector indexes, which are optimized for similarity search using the VECTOR_SEARCH function. This index significantly improves performance and resource efficiency.

Why Use a Vector Index?

DROP INDEX IF EXISTS PhotoEmbeddingIndex ON DotnetRAG.dbo.PhotoEmbedding;

CREATE VECTOR INDEX PhotoEmbeddingIndex ON DotnetRAG.dbo.PhotoEmbedding(Vector)

WITH (METRIC = 'cosine', TYPE = 'diskann');

To learn more about creating vector indexes, visit:

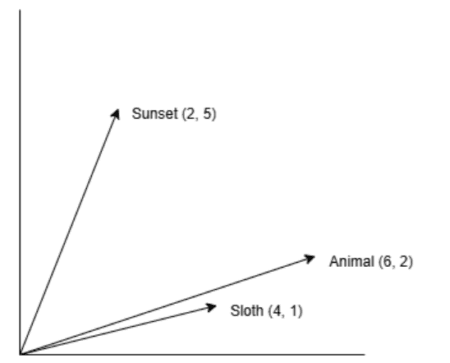

Cosine similarity is used in semantic search to determine how similar two pieces of text are based on their semantic meaning. This is done by measuring the cosine of the angle between their corresponding non-zero vectors in a multi-dimensional space. Text that is semantically similar will have embedded vectors pointing in the same direction, resulting in distances near zero when measured by the VECTOR_SEARCH function. The closer the distance is to zero, the more similar the texts are.

For example, by using two-dimensional embedding vectors for the words “Sunset,” “Animal,” and “Sloth,” we can plot these vectors as coordinates to visualize their relationships. Measuring the angles between “Sloth” and “Sunset” versus “Sloth” and “Animal” clearly shows that “Sloth” is more semantically aligned to “Animal,” as their vectors point in a similar direction. This enables software to return a photo of a sloth, even if the user searched for “animal.”

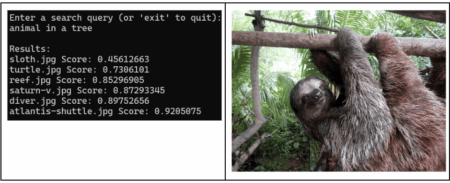

While humans perceive the physical in three dimensions, making it difficult to visualize 256 or even 1536 dimensions, these higher dimensions are vital for embedding small to large bodies of text. They capture more information, which in turn powers more complex semantic searches. For instance, a search query like “animal in a tree” could yield a photo captioned “sloth hanging from a tree branch”.

To learn more about the VECTOR_SEARCH function visit:

var query = "animal in a tree";

var options = new EmbeddingsOptions([query]);

options.Model = "embed-v-4-0";

options.Dimensions = 256;

var embeddingResult = await embeddingsClient.EmbedAsync(options);

var vector = embeddingResult?.Value?.Data?.FirstOrDefault()?.Embedding?.ToObjectFromJson<float[]>();

if (vector == null || vector.Length <= 0)

{

throw new InvalidOperationException($"Failed to generate embedding for '{query}'.");

}

var vectorJson = "[" + string.Join(", ", vector.Select(f => f.ToString("R"))) + "]";

sql = $@"

DECLARE @vector VECTOR({EmbeddingOrchestrator.EmbeddingDimensions}) = CAST(@VectorJson AS VECTOR({EmbeddingOrchestrator.EmbeddingDimensions}));

SELECT photoEmbedding.PhotoPath, searchResults.distance

FROM VECTOR_SEARCH(

TABLE = DotnetRAG.dbo.PhotoEmbedding AS photoEmbedding,

COLUMN = Vector,

SIMILAR_TO = @vector,

METRIC = 'cosine',

TOP_N = {6}

) AS searchResults

ORDER BY searchResults.distance;"

var searchResults = await sqlConnection.QueryAsync<PhotoEmbeddingSearchResultItem>(sql, new { vectorJson });

Console.WriteLine();

Console.WriteLine("Results:");

foreach (var resultItem in searchResults)

{

Console.WriteLine($"{Path.GetFileName(resultItem.PhotoPath)} Score: {resultItem.Distance}");

}

This post demonstrates how to build a custom RAG pipeline for semantic photo search using SQL Server 2025, Azure AI Vision, and Azure AI Foundry. By leveraging native vector support in SQL Server, you can create efficient and scalable semantic search applications directly within the .NET ecosystem.

Building on this, when implementing your own solutions, I suggest incorporating distance thresholds. This will help filter out results that are too far from the query vector, thereby removing semantically dissimilar items and ensuring users receive only the most relevant results.